Theory for Generative Models

ABSTRACT

Generative models like diffusion models and GANs have exploded in popularity as powerful ways of modeling real-world data and form the backbone of technologies like DALL-E 2. Yet when it comes to provable guarantees, we know very little about why these frameworks are so effective or how to articulate what it is they are accomplishing. In this talk I'll describe some recent progress in this direction.

In the first half, I'll prove that diffusion models can efficiently learn essentially any distribution D, even highly non-log-concave ones, provided one can perform "score estimation" to reasonable accuracy. Previous works either incurred exponential dependence on parameters such as the dimension or required strong distributional assumptions that preclude significant non-log-concavity.
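As a rough illustration of the role "score estimation" plays, here is a minimal sketch. It is not the algorithm or analysis from the talk. It assumes exact access to the score: the closed-form score of a bimodal (hence non-log-concave) 1-D Gaussian mixture stands in for a learned score estimate. The forward process is assumed to be Ornstein-Uhlenbeck, and sampling runs an Euler-Maruyama discretization of the reverse-time SDE. The mixture parameters, step counts, and function names (`exact_score`, `reverse_sample`) are hypothetical choices.

```python
import numpy as np

# Hypothetical target: a bimodal (hence non-log-concave) 1-D Gaussian mixture.
WEIGHTS = np.array([0.5, 0.5])
MEANS = np.array([-3.0, 3.0])
STD = 0.5

def exact_score(x, t):
    """Score grad_x log p_t(x) of the OU-noised mixture at time t > 0.

    Assumption: this closed form stands in for a learned score estimate.
    Under dX = -X dt + sqrt(2) dB, X_t | X_0 ~ N(e^{-t} X_0, 1 - e^{-2t}),
    so p_t is again a Gaussian mixture with shrunk means and a shared variance.
    """
    m = np.exp(-t) * MEANS                              # component means at time t
    v = np.exp(-2 * t) * STD**2 + 1 - np.exp(-2 * t)    # shared variance at time t
    # log(w_k * N(x; m_k, v)) up to a constant common to all components
    logits = np.log(WEIGHTS) - (x[:, None] - m) ** 2 / (2 * v)
    logits -= logits.max(axis=1, keepdims=True)         # numerical stability
    r = np.exp(logits)
    r /= r.sum(axis=1, keepdims=True)                   # posterior responsibilities
    return (r * (m - x[:, None])).sum(axis=1) / v

def reverse_sample(n, T=5.0, steps=500, seed=0):
    """Euler-Maruyama for the reverse-time SDE
    dY = (Y + 2 * score(Y, T - s)) ds + sqrt(2) dB, started from N(0, 1) ~ p_T."""
    rng = np.random.default_rng(seed)
    h = T / steps
    y = rng.standard_normal(n)
    for k in range(steps):
        t = T - k * h                                   # current forward time, never 0
        y = y + h * (y + 2 * exact_score(y, t)) + np.sqrt(2 * h) * rng.standard_normal(n)
    return y

samples = reverse_sample(20000)
print(np.mean(samples > 0))  # fraction of samples landing in the right-hand mode
```

Started from a single Gaussian, the sampler should split its mass between both well-separated modes. In practice the closed-form score would be replaced by an estimate, and the guarantee described above concerns how accurate such estimates must be.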

In the second half, I'll assume that D is a parametric transformation of the Gaussian measure, specifically its pushforward under a polynomial map, and show how to learn D provably and efficiently from samples without assuming one can perform score estimation. This result leverages the sum-of-squares hierarchy and reveals an intriguing connection to tensor ring decomposition.
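To make the modeling assumption concrete, here is a minimal sketch of how samples from such a D could be generated. It assumes a hypothetical degree-2 map in which each output coordinate is a quadratic form plus a linear term in a standard Gaussian latent vector. It only illustrates the data model. It does not implement the sum-of-squares learning algorithm from the talk, and the function name and parameters are illustrative.

```python
import numpy as np

def sample_polynomial_pushforward(A, b, n, seed=0):
    """Draw n samples x = P(z) with z ~ N(0, I_d).

    Hypothetical degree-2 map: coordinate i is P_i(z) = z^T A[i] z + b[i]^T z,
    with A of shape (m, d, d) and b of shape (m, d). Returns shape (n, m).
    """
    rng = np.random.default_rng(seed)
    d = A.shape[1]
    z = rng.standard_normal((n, d))              # latent Gaussian samples
    quad = np.einsum("nj,ijk,nk->ni", z, A, z)   # quadratic part, one column per output coordinate
    lin = z @ b.T                                # linear part
    return quad + lin

# Example: a random quadratic map from R^3 to R^5 (parameters are arbitrary).
rng = np.random.default_rng(1)
A = rng.standard_normal((5, 3, 3))
b = rng.standard_normal((5, 3))
X = sample_polynomial_pushforward(A, b, n=1000)
print(X.shape)  # (1000, 5)
```

The learning problem is the inverse of this sketch: given only samples such as `X`, recover the map's parameters up to the symmetries of the Gaussian.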

SPEAKER BIO

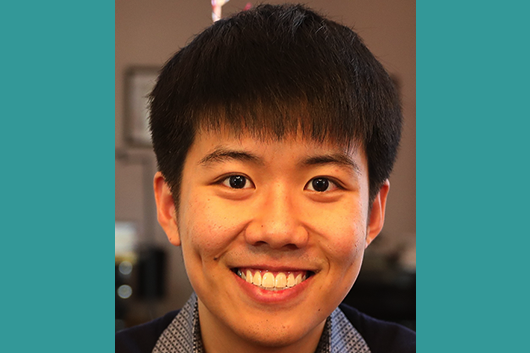

Sitan Chen is an NSF Mathematical Sciences Postdoctoral Research Fellow at UC Berkeley, hosted by Prasad Raghavendra. In the 2023-2024 academic year he will join Harvard as an Assistant Professor in Computer Science. He recently received his PhD in EECS from MIT under the supervision of Ankur Moitra. He has been the recipient of a Paul and Daisy Soros Fellowship, an Akamai Presidential Fellowship, and the Captain Jonathan Fay Prize. His research focuses on designing algorithms with provable guarantees for fundamental problems in data science, especially in the context of generative modeling, robustness, deep learning, and quantum learning.